Blog

Insights about online education, learning technology, and platform updates from our team.

LoRA and QLoRA Explained — Fine-Tune LLMs Without Selling Your Kidney for GPUs

Fully fine-tuning a 7B model can call for something like 4x A100 GPUs. You have a free Colab notebook with about 15GB of GPU memory. Game over? Not even close. LoRA and QLoRA let you fine-tune billion-parameter models on hardware you already have. Here's how they actually work.

How to Evaluate LLM Outputs — Beyond 'Looks Good to Me'

Your RAG pipeline returns an answer. It sounds confident. But is it actually correct? Turns out 'vibes-based evaluation' doesn't scale. Learn the metrics and frameworks that actually tell you if your LLM is hallucinating, missing context, or nailing it.

The LLM Interview Cheat Sheet — 10 Questions That Actually Come Up

You've used ChatGPT, built a RAG pipeline, maybe even fine-tuned a model. But can you explain how attention actually works when the interviewer asks? Here are 10 LLM questions that keep showing up in interviews — with answers that actually make sense.

Small Language Models Are Eating the World (And You Should Care)

Why TinyLlama, Phi, and Mistral 7B beat huge models for 95% of real-world tasks. The efficiency revolution is here.

Running LLMs on Your Laptop Without a $10K GPU

Practical guide to running production-ready LLMs locally using Ollama, llama.cpp, and quantization. No GPU cluster required.

Over-Reliance on LLMs for Coding Is the New Dunning-Kruger

When AI becomes a skill substitute instead of a skill amplifier. What to keep learning manually. The cost of convenience.

Why Your RAG App Gives Wrong Answers — And How to Actually Fix It

You built a RAG pipeline, connected a vector DB, and it still hallucinates. What gives? A deep dive into the failure modes hiding in your retrieval, chunking, and generation — and how to debug each one.

What Are Reasoning Models and Why Do They Think Before Answering?

o1, o3, DeepSeek R1 — a new breed of LLMs that literally pause to think. But what does 'thinking' mean for a model? Inside thinking tokens, chain-of-thought training, and why this changes everything about how LLMs solve problems.

The 'Lost in the Middle' Problem — Why LLMs Ignore the Middle of Your Context Window

You stuffed all the right documents into the prompt. The LLM still got the answer wrong. Turns out, language models have a blind spot — and it's right in the middle. Here's the research behind it and what you can do.

How LLMs Actually Generate Text — Temperature, Top-K, Top-P, and the Dice Rolls You Never See

You set temperature to 0.7 because a tutorial told you to. But do you know what that actually does? Under the hood of every LLM response is a probability game — here's how the dice are loaded.

Prompt Engineering vs RAG vs Fine-Tuning — It's Not a Ladder, It's a Decision Tree

Everyone says: start with prompting, then try RAG, then fine-tune. That advice is wrong. Here's how to actually choose the right LLM optimization strategy — based on your constraints, not a fixed sequence.

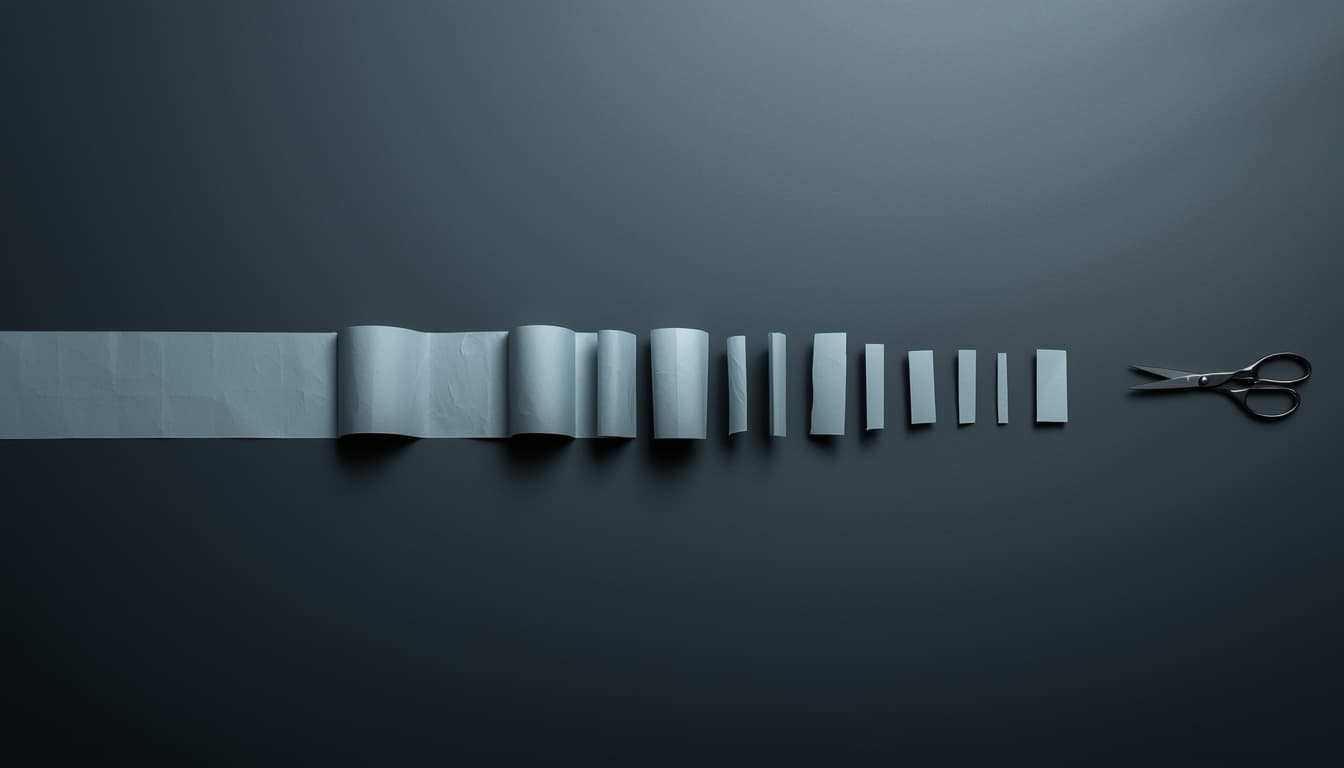

Chunking Strategies That Actually Work — Why Your RAG App Retrieves Garbage

Fixed-size, recursive, semantic — everyone has an opinion on the 'best' chunking strategy. The 2026 benchmarks are in, and the results will surprise you. Here's what actually works and why.

Your RAG Pipeline Is Retrieving Garbage — Here's How to Fix It with Hybrid Search and Reranking

You know RAG can fail. But do you know how to actually fix it? Beyond the basics — hybrid search, cross-encoder reranking, query decomposition, and contextual retrieval explained with real examples.

Build Your First MCP Server in Python — A Weekend Project That Actually Impresses

You've heard MCP is the 'USB-C for AI.' But what does it take to actually build an MCP server? A hands-on walkthrough of creating one from scratch using Python and FastMCP, with tools your LLM can call.

How AI Agents Actually Execute Multi-Step Tasks — The Orchestration Nobody Talks About

You asked the AI to 'book a flight and update the spreadsheet.' It did both. But how? A deep dive into the reasoning loop, tool calling, and orchestration patterns that make AI agents actually work.

What Is Model Context Protocol (MCP) — And Why It's Being Called USB-C for AI

Your AI agent can write code, but it can't read your database or send a Slack message without duct-tape integrations. MCP is the open standard that fixes this — here's how the protocol works, why it matters, and what it means for developers.